Just about five years ago, Oak Ridge National Laboratory launched the IBM-built Summit supercomputer, powered by IBM and Nvidia hardware, to the top of the Top500 list. Now, the erstwhile HPC juggernaut is launching a new supercomputer that reflects the company’s shift in direction: Vela, an AI-focused, cloud-native supercomputer with Intel and Nvidia hardware.

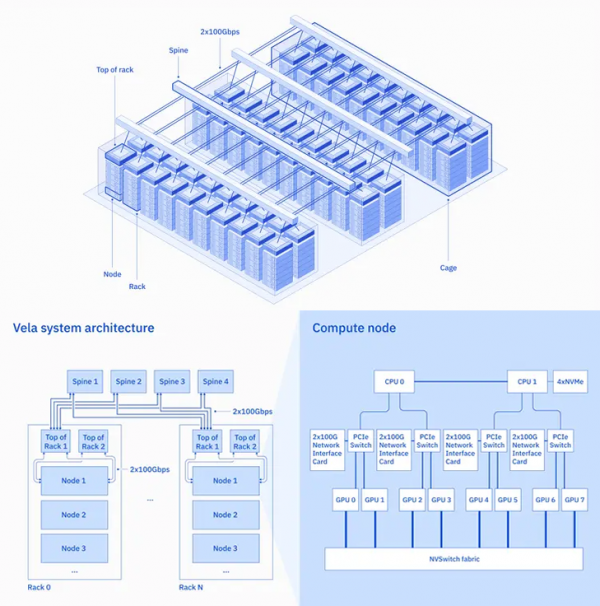

Specs first. Each of Vela’s nodes is equipped with dual Intel Xeon “Cascade Lake” CPUs (notably forgoing IBM’s own Power10 chips, introduced in 2021), eight Nvidia A100 (80GB) GPUs, 1.5TB of memory and four 3.2TB NVMe drives. In its blog post announcing the system, IBM said the nodes are networked via “multiple 100G network interfaces,” and that each node is connected to a different top-of-rack switch, each of which, in turn, is connected to four different spine switches, ensuring both strong cross-rack bandwidth and insulation from component failure. Vela is “natively integrated” with IBM Cloud’s virtual private cloud (VPC) environment.

Vela, which has been online since May of last year, consists of 60 racks (according to Forbes) and an unspecified number of nodes; however, one might be inclined to trust the above diagram (accurate in all other respects) and guess six nodes per rack, for a total of 360 nodes and 2,880 A100 GPUs.
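The back-of-the-envelope estimate above can be reproduced in a few lines of Python. This is only a sketch: the six-nodes-per-rack figure is the guess read off the diagram, not an IBM-confirmed number, and the `NodeSpec` class and `estimate_totals` function are hypothetical names introduced here for illustration. The per-node values come from the specs listed earlier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeSpec:
    """Per-node hardware as described in the article."""
    gpus: int = 8              # Nvidia A100 GPUs per node
    gpu_memory_gb: int = 80    # memory per A100
    dram_tb: float = 1.5       # system memory per node
    nvme_drives: int = 4       # NVMe drives per node
    nvme_drive_tb: float = 3.2 # capacity per NVMe drive


def estimate_totals(racks: int, nodes_per_rack: int, node: NodeSpec = NodeSpec()) -> dict:
    """Scale per-node figures up to the whole system."""
    nodes = racks * nodes_per_rack
    gpus = nodes * node.gpus
    return {
        "nodes": nodes,
        "gpus": gpus,
        "gpu_memory_gb": gpus * node.gpu_memory_gb,
        "dram_tb": nodes * node.dram_tb,
        "nvme_tb": nodes * node.nvme_drives * node.nvme_drive_tb,
    }


# 60 racks is the Forbes figure; 6 nodes per rack is an ASSUMPTION from the diagram.
totals = estimate_totals(racks=60, nodes_per_rack=6)
print(totals["nodes"], "nodes,", totals["gpus"], "GPUs")
```

Changing `nodes_per_rack` shows how sensitive the totals are to that one unconfirmed number: every additional node per rack adds 60 nodes and 480 GPUs to the estimate.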

IBM designed Vela with AI firmly in mind and, specifically, the development of foundation models, which IBM describes as “AI models trained on a broad set of unlabeled data that can be used for many different tasks.” To that end, the company opted for substantial memory at every level: the larger-memory variant of the A100 and substantial DRAM and NVMe, all suited to caching AI training data and related tasks.

Interestingly, IBM made the choice to enable virtual machine (VM) configuration on Vela, arguing that while bare-metal is preferred for AI performance, VMs provide more flexibility. To ameliorate the performance impacts, IBM says that they “devised a way to expose all of the capabilities on the node … into the VM,” reducing virtualization overhead to less than 5%.

In the announcement, IBM also made several pointed remarks vis-a-vis the elements of traditional HPC it was eschewing with Vela. The authors wrote that “traditional supercomputers” with elements like high-performance networking hardware “weren’t designed for AI; they were designed to perform well on modeling or simulation tasks, like those defined by the U.S. national laboratories[.]” The authors even called out Microsoft’s Azure AI supercomputer, built for OpenAI, as an example of a “traditional design” that drives “technology choices that increase cost and limit deployment flexibility.”

“Given our desire to operate Vela as part of a cloud, building a separate InfiniBand-like network – just for this system – would defeat the purpose of this exercise,” the authors explained. “We needed to stick to standard Ethernet-based networking that typically gets deployed in a cloud.”

For now, IBM is only offering Vela to the IBM Research community, with the company describing the system as its new “go-to environment” for IBM researchers working on AI. However, IBM also hinted that Vela is a proof of concept for a larger deployment plan.

“While this work was done with an eye towards delivering performance and flexibility for large-scale AI workloads, the infrastructure was designed to be deployable in any of our worldwide data centers at any scale,” the authors wrote. And: “While the work was done in the context of a public cloud, the architecture could also be adopted for on-premises AI system design.”

Source: https://www.hpcwire.com/2023/02/08/ibm-introduces-vela-cloud-ai-supercomputer-powered-by-intel-nvidia/